Digital Visual Studies · 2024

An AI Image of the Imagined Image of AI

A companion piece to Material Configurations of AI-Generated Texts. Where that paper opens the machine to examine how AI-generated text materially emerges, this one asks what it means that AI is consistently imagined in the same way.

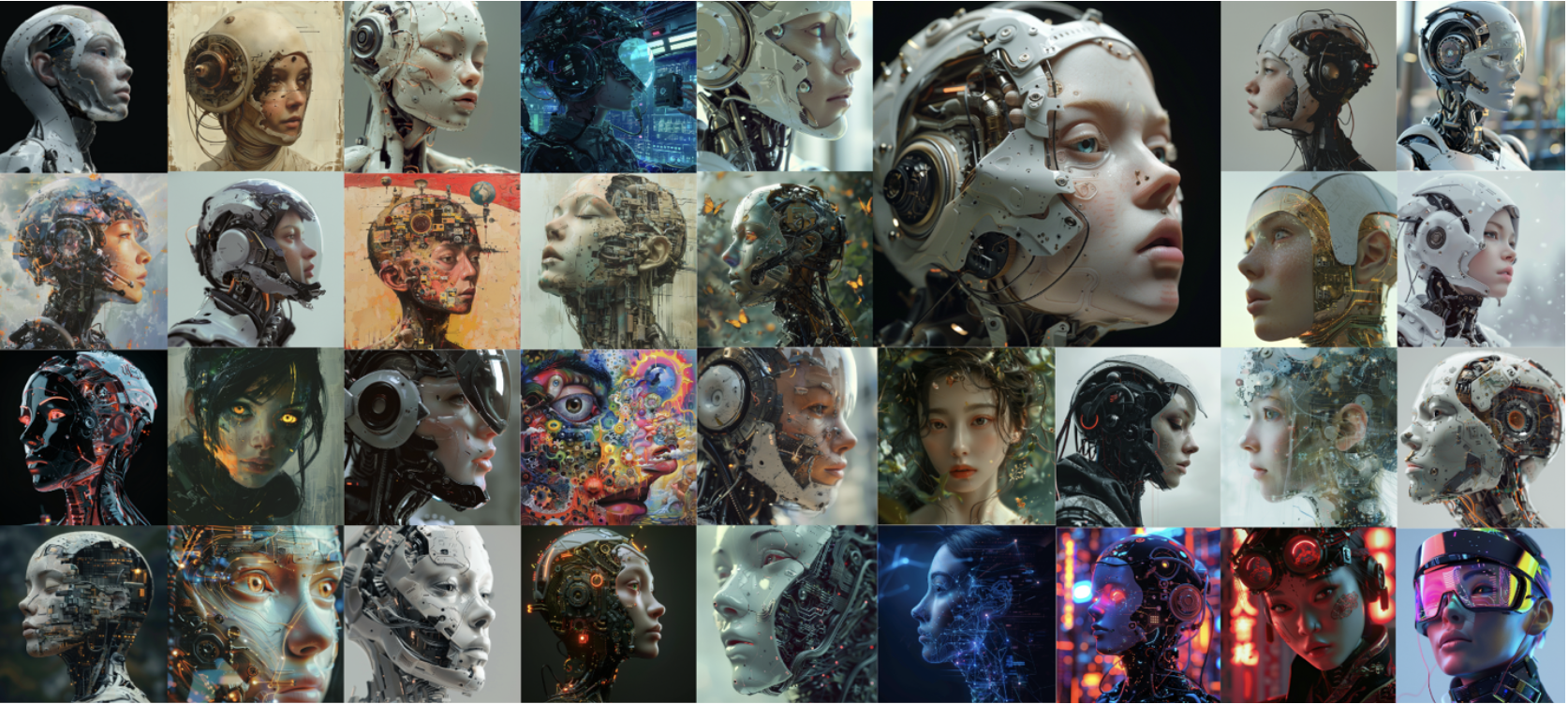

If you type /imagine Artificial Intelligence in Midjourney's Discord server, you will probably get an image of a robot with a white, female face, and you might think, "Yeah, that's about right." It may not stray far from how you imagine AI yourself. At least it isn't giving you a picture of a cat or a plate of pancakes.

Yet repeat this process a hundred times and a striking pattern emerges: the images are anthropomorphized, feminized, and presented in side profile. This visual repetitiveness points to underlying sociopolitical issues. How we imagine AI is shaped by historical and cultural antecedents, and the data that determine how generative image models visualize AI are produced within those same contexts. The limited variability in AI's representation has further negative societal consequences, driven not only by the imaginaries embedded in the data but also by the logic of generative models, which actively forecloses political potential.

The Argument

The paper traces three overlapping patterns in AI's visual representation (anthropomorphization, feminization, and the prevalence of side profiles) back to their historical and sociocultural roots. From Ovid's Pygmalion to Alan Turing's Imitation Game, the imaginary of AI has always been entangled with the imaginary of the human, and specifically of the woman as a synthetic subordinate.

Generative image models do not invent these representations. They inherit and amplify them. Trained on data produced within the same cultural contexts, they create a feedback loop: biased inputs produce biased outputs, which in turn become new training data, foreclosing the possibility of transformation. The limited variability in how AI is depicted is not a technical accident. It is a political condition.

Aura, Reproducibility & the Paradigm Shift

Beyond the sociopolitical critique, these images also demand a rethinking of what kind of art object they actually are.

The paper turns to Walter Benjamin's framework of aura and technological reproducibility to address this question. AI-generated images are neither copies nor originals; they are simulacra of an aggregated cultural concept, each output unique yet none traceable to a single author or origin. Aura, in this context, resides not in the image itself but in the opaque, unpredictable interplay between human prompting and machine generation.

This does not grant generative models inherent political power. Their democratizing potential is real but partial, constrained by the same biases encoded in their training data, and by the asymmetry of power between the corporations that control data extraction and the users who type the prompts.

Just as we make AI human, we make it a woman.

Full Paper

The complete paper includes an extended analysis of the historical feminization of robots, close readings of Benjamin and Offert, and a discussion of the political limits of generative image models as art objects. Unpublished manuscript.
